The Orchestration Engine for Modern AI

Kubernetes (K8s) is an open-source system for container orchestration, running applications packaged in containers at scale. It automates deployment, scaling, and day-to-day operations so applications stay available as infrastructure changes.

First created at Google to manage its own large-scale services, Kubernetes has since become the most widely adopted platform for orchestrating containers in environments ranging from small teams to massive data centers. Its growth has been driven not just by technology but by the strength of its community under the Cloud Native Computing Foundation (CNCF), with events like KubeCon serving as hubs for innovation and best practices.

While Kubernetes is widely used for orchestrating microservices, web applications, and data processing pipelines, its role in artificial intelligence (AI) and machine learning (ML) workloads has become increasingly critical. Advanced AI workloads, such as training large models or serving inference at scale, demand massive compute power across clusters of GPUs, and they need to scale dynamically as demands grow.

By abstracting away infrastructure complexity, Kubernetes allows teams to run compute-intensive jobs more reliably. In AI contexts, this means efficiently scheduling GPU resources, distributing training across clusters, and keeping inference services resilient under heavy demand.

Kubernetes architecture and core components

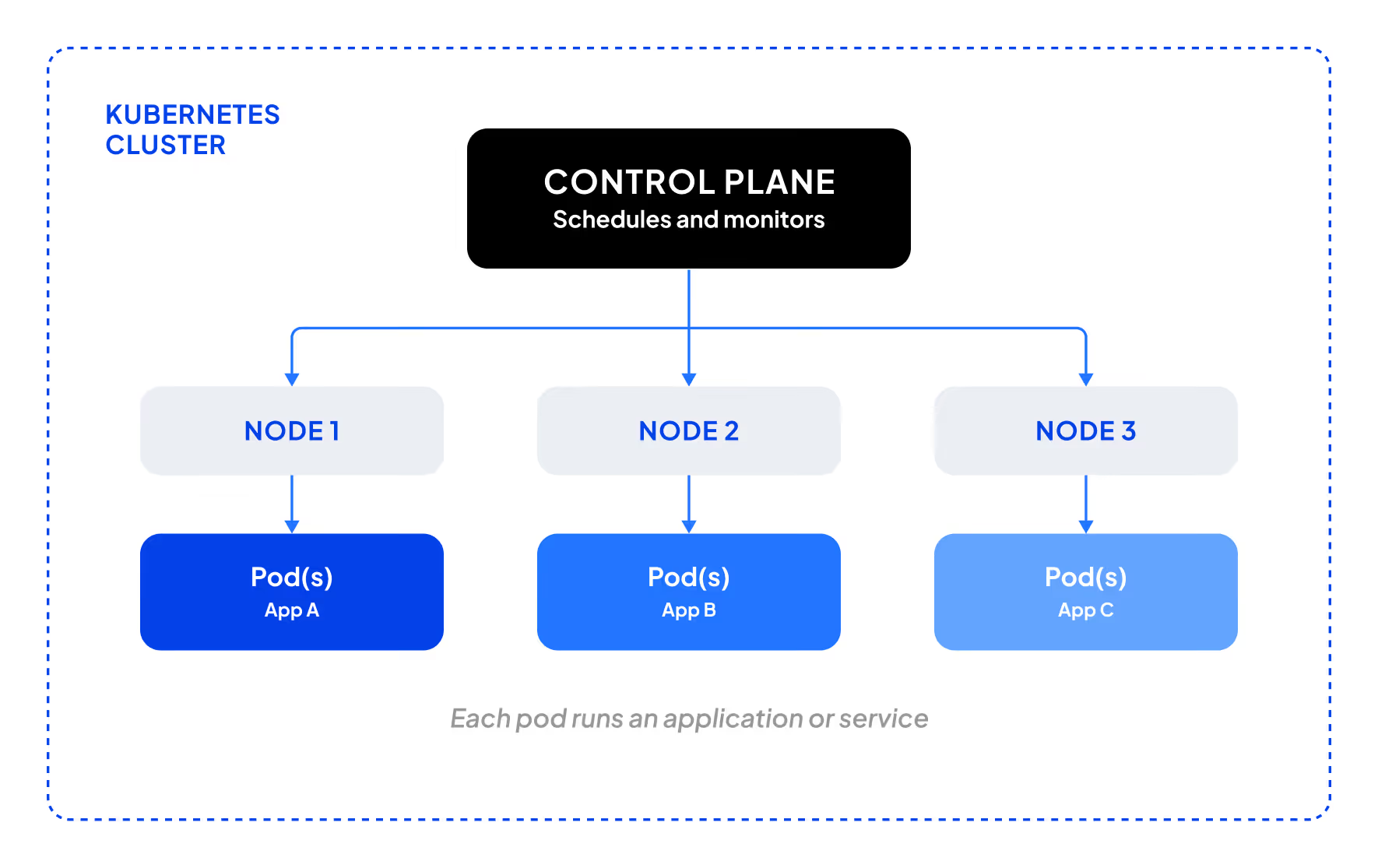

Kubernetes is built on a set of core concepts that work together to orchestrate containers across distributed environments. These building blocks are fundamental to how Kubernetes manages scale, availability, and reliability.

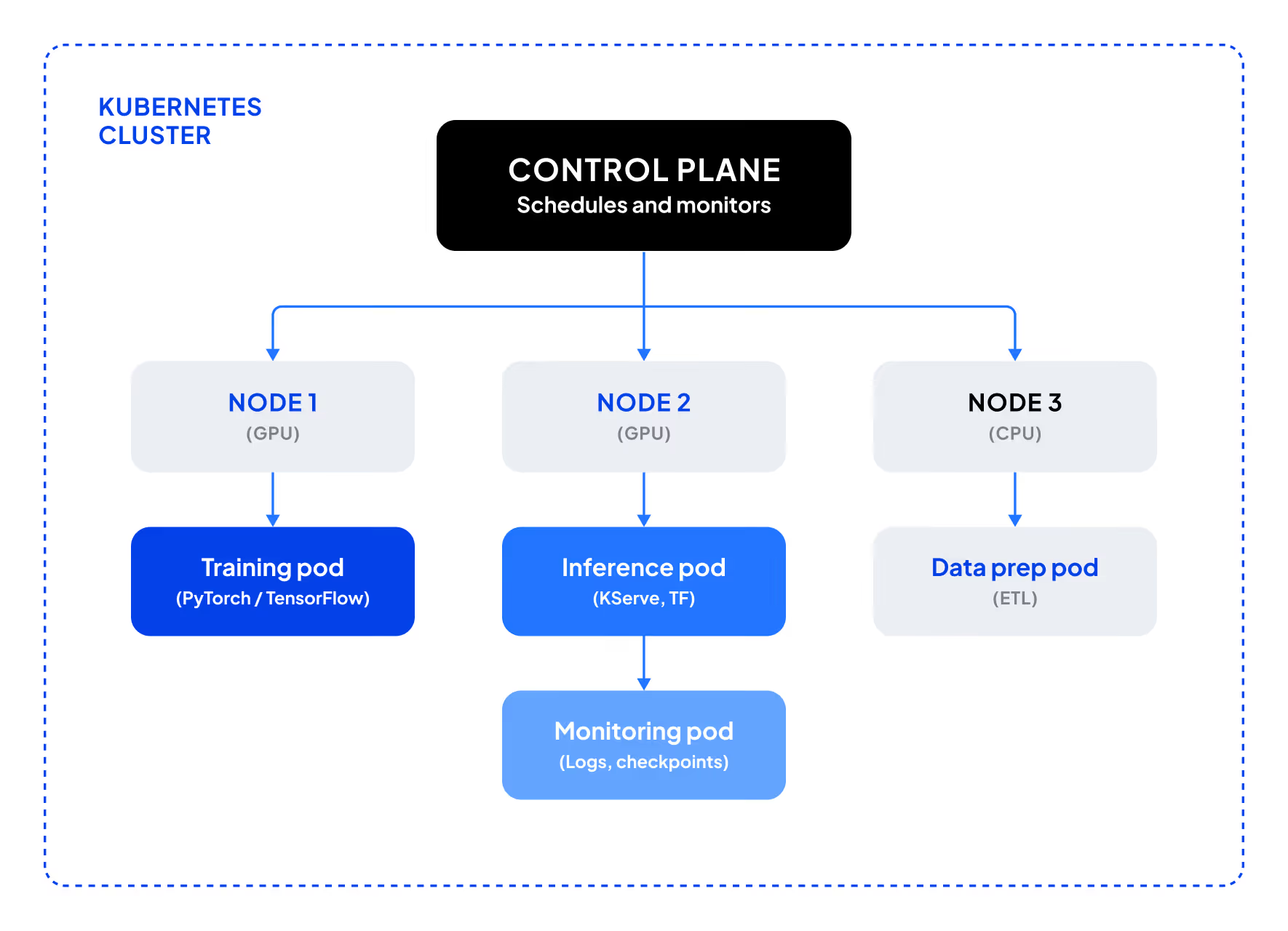

This model is what makes Kubernetes so widely adopted across industries. The control plane abstracts away infrastructure complexity, nodes provide the underlying compute, and pods package applications consistently. Together, these elements allow teams to run everything from web apps to data pipelines with the same reliability, scalability, and portability. While the same architecture concepts apply broadly, their impact becomes especially clear in AI and ML, where workloads are compute-intensive and often run across thousands of containers.

Core components of Kubernetes include:

- Pods: the smallest unit Kubernetes deploys; a pod can host one or more containers that share resources, making it easy to package tasks like a training worker, data preprocessing step, or inference service

- Deployments: keep the desired number of pods running, automatically replacing unhealthy or failing pods, providing redundancy and resiliency

- Nodes and clusters: nodes are the physical or virtual machines that run pods, and clusters are groups of nodes working together to provide scale and reliability; in AI and ML workloads, clusters supply the parallelism needed to distribute training across GPU-enabled nodes and to serve inference reliably under fluctuating demand

- Services and networking: provide stable communication between pods and external apps, with built-in load balancing and service discovery

- Control plane: the “brain” of Kubernetes, maintaining desired cluster state and scaling workloads as needed

Together, these components form a flexible system for running everything from simple web apps to complex AI pipelines across clusters of machines.

In practice, this architecture is what makes Kubernetes powerful for AI workloads at scale. For example, pods can be scheduled to GPUs for training, clusters distribute jobs across many machines, and the control plane ensures workloads recover automatically if a node fails. This safeguard is especially important for multi-day training runs or high-availability inference pipelines. Overall, these mechanics allow teams to train large models or serve production AI workloads reliably, even when thousands of containers are in play.

Kubernetes for AI workloads

While Kubernetes is widely used for microservices and web applications, it has become indispensable for many AI and Kubernetes machine learning workloads that require massive compute resources and dynamic scaling.

Kubernetes isn’t just for microservices. It powers some of the world’s most compute-intensive AI workloads, from distributed training to inference pipelines.

GPU scheduling and resource management

AI workloads are resource-intensive and often depend on GPUs, which are in high demand. Kubernetes supports GPU scheduling through device plugins, which allow pods to request specific GPU resources. This ensures jobs land on the right nodes and helps maximize utilization.

Distributed training across clusters

Training large AI models requires splitting work across multiple GPUs and nodes. Kubernetes enables this by distributing jobs across clusters, coordinating workload placement, and maintaining cluster health. With frameworks like PyTorch or TensorFlow, Kubernetes can orchestrate distributed training jobs efficiently. This is one of the ways modern container orchestration tools are enabling large-scale AI workloads.

Advanced strategy: Slurm and Kubernetes

Many AI teams use both Slurm and Kubernetes at scale. Slurm remains the familiar scheduler in research while Kubernetes adds resilience, lifecycle management, and automation. CoreWeave has standardized this hybrid model with its SUNK architecture, letting researchers keep the Slurm interface they know while benefiting from Kubernetes’ orchestration strengths.

Autoscaling inference pipelines

Inference, or running trained models in production, has elastic demand. In cloud environments, Kubernetes can automatically scale inference services up during peak traffic and back down when demand drops, ensuring responsiveness without overspending on idle resources. In on-premises clusters, autoscaling helps maximize fixed GPU capacity by shifting resources dynamically to where they are needed most.

Fault tolerance in long-running jobs

Many workloads run for extended periods, from data processing pipelines to batch simulations. If a pod or node fails during execution, Kubernetes automatically reschedules the workload, reducing the risk of wasted compute time.

This capability becomes especially important in AI training, where jobs may run for days or weeks and involve thousands of GPUs. By combining Kubernetes’ fault tolerance with checkpointing strategies that periodically save model state, teams can recover quickly from interruptions and keep large-scale training jobs on track.

Ultimately, Kubernetes provides the orchestration layer that makes large-scale AI possible in practice, from managing scarce GPUs to keeping services resilient under unpredictable demand.

Kubernetes use cases in AI

Kubernetes provides the orchestration backbone for a range of AI and machine learning workloads. As AI adoption accelerates, these are the scenarios where Kubernetes delivers the most impact.

Training large models: Distributing training jobs across clusters of GPU-enabled nodes to handle models with billions of parameters.

Scaling inference services: Running production workloads such as recommendation engines, fraud detection models, or generative AI applications that must scale up or down with demand.

Hybrid and multi-cloud deployments: Supporting flexible infrastructure strategies, such as bursting to the cloud for intensive training while maintaining steady inference on-premises.

Collaborative research and experimentation: Allowing multiple teams to share resources efficiently, with Kubernetes isolating workloads to maximize utilization and reduce conflicts.

Benefits of Kubernetes for AI and ML

The capabilities provided by Kubernetes make it a powerful tool for optimizing AI and machine learning workloads, especially those that need to scale beyond a single machine.

Scalability

AI workloads can grow quickly, from a handful of experiments to training massive models with billions of parameters. Kubernetes enables the seamless scaling of jobs across clusters of nodes, ensuring workloads expand smoothly as demand increases.

Efficient GPU/CPU utilization

Because GPU resources are essential to AI, high utilization is essential to maximizing budget. Kubernetes allows pods to request specific GPU and CPU resources, a key part of Kubernetes GPU scheduling that ensures workloads are assigned where needed. Autoscaling and workload isolation help teams make the most of every GPU hour.

Portability across hybrid and multi-cloud environments

AI teams often need to run workloads across a mix of environments: on-premises, cloud, or hybrid. Kubernetes abstracts the infrastructure layer so the same workloads can run consistently across different platforms, reducing vendor lock-in and enabling flexibility in resource planning.

Built-in resilience

From pod restarts to automatic rescheduling, Kubernetes ensures workloads keep running even when individual components fail. For long-running AI training jobs, this resilience minimizes disruption and helps protect against lost compute cycles. For example, CoreWeave extends Kubernetes’ fault tolerance with Mission Control, which detects and replaces bad nodes automatically.

By combining scalability, efficiency, portability, and resilience, Kubernetes enables AI teams to run larger and more complex workloads with greater confidence and cost control.

Challenges of running Kubernetes for AI at scale

Running Kubernetes for AI workloads introduces demands beyond typical web or enterprise applications. Training and serving models require massive compute resources across clusters of GPUs, and even small inefficiencies can waste budget or delay results. Here are some of the biggest challenges when using Kubernetes out of the box.

Complexity of setup and operations

Standing up Kubernetes clusters requires deep expertise in networking, security, and scaling. To reduce this overhead, many teams turn to managed Kubernetes services. Some providers design their environments specifically for AI, simplifying setup while optimizing GPU utilization.

Scenario: Node failure

On a hyperscaler cloud, if a node fails during a long training job, it may be rescheduled on the same faulty hardware, costing hours of GPU time. On an optimized AI Cloud, faulty nodes are automatically removed and workloads fail over to healthy nodes, minimizing disruption.

GPU bottlenecks and memory limits

Large models often exceed a single GPU’s memory. Without careful scheduling, workloads may crash or leave GPUs underutilized. Advanced approaches like GPU partitioning or topology-aware placement can help, but are difficult to implement without specialized expertise.

Networking constraints in distributed training

Multi-node training relies on fast interconnects. If Kubernetes schedules pods across nodes without considering topology, bandwidth bottlenecks can slow performance. Providers that combine Kubernetes with high-speed fabrics and topology-aware scheduling achieve far better efficiency.

Monitoring and fault tolerance overhead

Kubernetes reschedules failed pods, but AI jobs often need deeper visibility and checkpointing. Many teams add these tools themselves, but specialized AI-focused environments now build in observability and fault tolerance by default.

Advanced Strategy: Gang scheduling

For large training jobs, gang scheduling ensures all GPU resources are available before a job starts. This avoids wasted cycles by preventing jobs from starting until the cluster is ready.

Ecosystem trends and future outlook

The role of Kubernetes continues to expand in AI and HPC, but adoption patterns vary across the ecosystem. In academia and large research institutions, Slurm remains the dominant scheduler, reflecting familiarity with HPC-style batch workloads. By contrast, startups and AI labs increasingly favor Kubernetes-native container orchestration tools like Ray, Kubeflow, or Kube-batch, which provide job orchestration in a cloud-native way and integrate directly with MLOps workflows. Many organizations adopt a hybrid approach, running Slurm for large-scale training while relying on Kubernetes to serve models in production.

The convergence of HPC and cloud-native

As AI workloads grow more complex, the distinction between HPC and cloud-native approaches is narrowing. Hybrid models—such as Slurm-on-Kubernetes—are becoming more common, allowing teams to combine the efficiency of HPC scheduling with the resilience and automation of Kubernetes. Looking ahead, Kubernetes is likely to play an even greater role as the orchestration backbone for next-generation AI workloads.

.avif)