An AI cloud refers to cloud computing infrastructure specifically optimized for building, training, and running artificial intelligence models. It combines specialized compute such as GPUs, high-performance storage, networking, and software for orchestrating, managing, and monitoring AI workloads, all operating in a highly secure global environment. Together, these deliver the power AI workloads require without the limits of traditional clouds or on-premises hardware.

Unlike general-purpose cloud platforms, AI clouds are designed to handle the massive data movement, parallel processing, and dynamic scaling that modern machine learning demands. They enable organizations to train large models faster, deploy inference workloads efficiently, and collaborate across distributed teams with secure access to shared resources.

Flexible, elastic, and engineered specifically for AI, AI clouds are where the next generation of intelligent systems is being built.

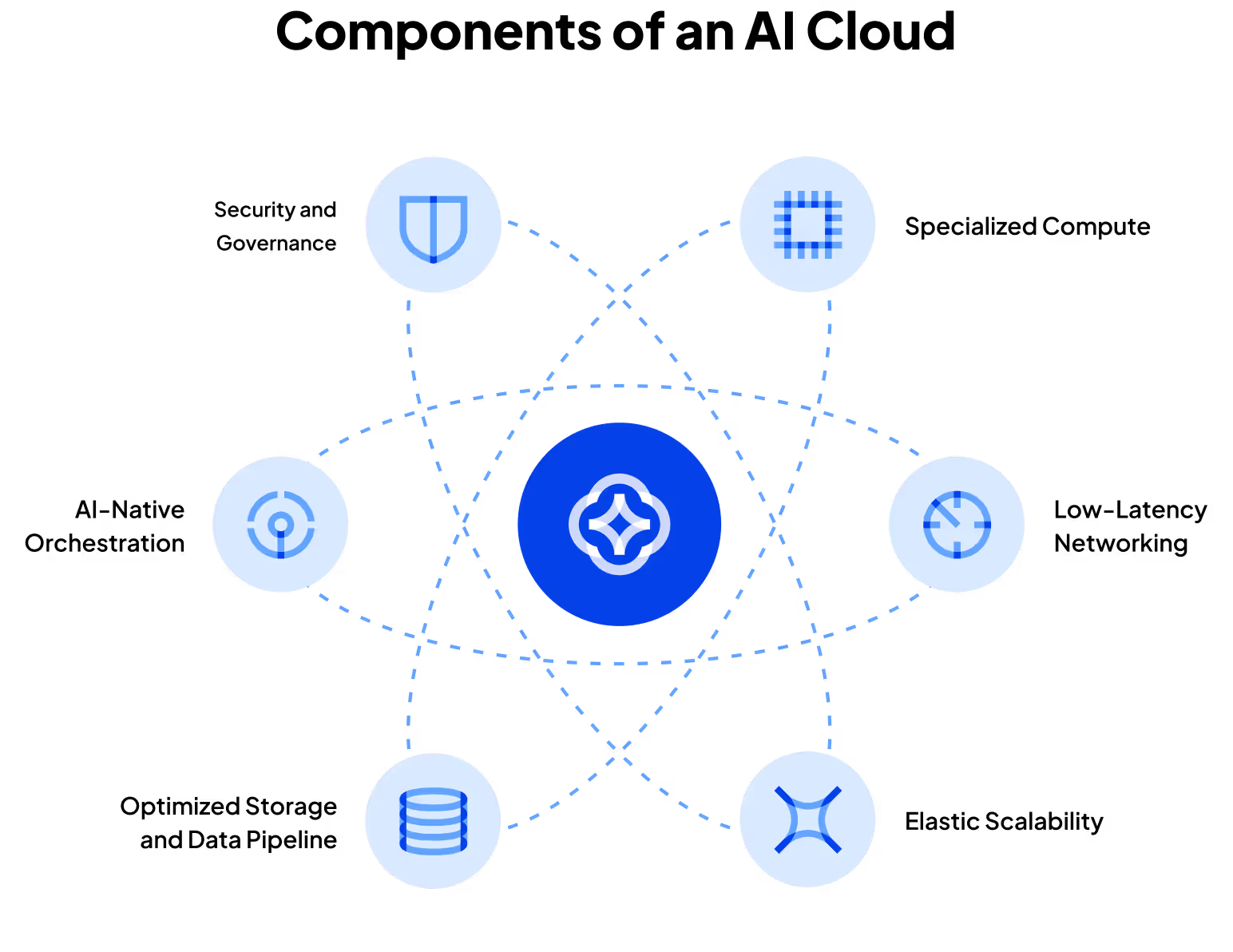

Key features of an AI cloud

An AI cloud is more than just rented compute power. It is a purpose-built environment that combines infrastructure, software, and orchestration tools to support the full AI development cycle, from data preparation and model training to deployment and monitoring. Its value lies in how seamlessly these layers interact to deliver the scale, speed, and flexibility that AI workloads demand.

In a traditional data center, these components often exist in silos: Storage is separated from compute, orchestration is managed manually, and scaling is handled through complex provisioning. AI clouds unify these layers under a single software-defined framework, giving developers and researchers the ability to move quickly and experiment freely without managing hardware constraints.

Specialized compute

AI workloads rely on high-performance processors built for parallel computation. AI clouds primarily use GPU clusters, the core engines of modern AI, because GPUs handle large-scale matrix operations and gradient calculations efficiently. Unlike general-purpose clouds, an AI cloud tunes GPU performance for the specific AI task. For example, it may remove layers of abstraction between workloads and hardware and add AI-specific software. This combination ensures that both model training and inference can run effectively at scale.

Optimized storage and data pipeline

AI development depends on rapid movement of large, unstructured datasets. AI clouds feature high-throughput storage systems that support parallel reads and writes, as well as data streaming from multiple sources. Integrated data pipelines manage ingestion, preprocessing, and caching so that data scientists can feed models continuously without waiting for transfers or file conversions.
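
As a minimal sketch of the idea, the Python below reads shards concurrently and caches them, so a training loop receives data in order without waiting on each read in turn. It assumes a hypothetical `load_shard` function that stands in for fetching and preprocessing one shard from storage. A production pipeline would use its framework's own data loaders. This only illustrates parallel reads combined with caching.

```python
from concurrent.futures import ThreadPoolExecutor
from functools import lru_cache
from typing import Iterable, Iterator


@lru_cache(maxsize=256)
def load_shard(shard_id: int) -> tuple[int, ...]:
    """Hypothetical loader standing in for fetching and preprocessing one shard.

    Returns an immutable tuple so cached results cannot be mutated by callers.
    """
    return tuple(shard_id * 10 + i for i in range(3))


def stream_shards(shard_ids: Iterable[int], workers: int = 4) -> Iterator[tuple[int, ...]]:
    """Load shards on a thread pool and yield them in the original order."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        # pool.map submits every read up front and yields results in input order,
        # so later shards are fetched while earlier ones are being consumed.
        yield from pool.map(load_shard, shard_ids)
```

On a second pass over the same shards, such as a new epoch, reads are served from the cache instead of storage. In practice, the cache size would be bounded by available memory rather than by a fixed `maxsize`.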

Low-latency networking

Training a large model often requires dozens or even hundreds of nodes to exchange data constantly. Low-latency, high-bandwidth interconnects minimize bottlenecks during distributed training, reducing the time it takes to synchronize parameters across nodes. This networking layer ensures that scaling out computation does not come at the expense of performance or accuracy.
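
A common back-of-the-envelope model shows why both latency and bandwidth matter here. It estimates the cost of a ring all-reduce, a widely used pattern for synchronizing gradients. With N nodes, every step sends a 1/N chunk of the gradients, and the reduce-scatter and all-gather phases take 2(N-1) steps in total. The sketch below assumes that simple latency-bandwidth model and ignores overlap with computation, congestion, and network topology. The link figures in the usage block are illustrative, not measured.

```python
def ring_allreduce_seconds(
    num_nodes: int,
    gradient_bytes: float,
    link_latency_s: float,
    link_bandwidth_bytes_per_s: float,
) -> float:
    """Estimate one ring all-reduce under a simple latency-bandwidth model.

    Time = 2(N-1) * (latency + (S/N) / bandwidth): reduce-scatter and
    all-gather each take N-1 steps, and every step moves one 1/N chunk.
    """
    if num_nodes < 2:
        return 0.0  # nothing to synchronize on a single node
    steps = 2 * (num_nodes - 1)
    chunk_bytes = gradient_bytes / num_nodes
    return steps * (link_latency_s + chunk_bytes / link_bandwidth_bytes_per_s)


if __name__ == "__main__":
    # Illustrative: 1 GB of gradients across 64 nodes on a faster vs. slower link.
    fast = ring_allreduce_seconds(64, 1e9, 5e-6, 50e9)
    slow = ring_allreduce_seconds(64, 1e9, 50e-6, 12.5e9)
    print(f"fast link: {fast * 1000:.1f} ms, slow link: {slow * 1000:.1f} ms")
```

Under these assumed numbers, each synchronization takes roughly 40 ms on the faster link and about 164 ms on the slower one. That cost is paid on every training step that synchronizes gradients.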

Elastic scalability

AI workloads are dynamic: training runs can occupy GPUs continuously for days, inference traffic is bursty, and the same infrastructure often switches between the two. Elastic scaling allows teams to spin up thousands of GPUs for long- or short-term projects and then release them immediately afterward. This flexibility removes the need for fixed capital investment and lets organizations match infrastructure to workload demand in real time.
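
One common way to express elastic scaling is the proportional rule used by the Kubernetes Horizontal Pod Autoscaler: desired replicas = ceil(current replicas × current metric / target metric), clamped to configured bounds. The sketch below applies that rule to hypothetical GPU inference replicas. It assumes the metric is a per-replica load figure such as requests per second. The tolerance band that the real autoscaler uses to avoid flapping is omitted.

```python
import math


def desired_replicas(
    current_replicas: int,
    current_metric: float,
    target_metric: float,
    min_replicas: int = 1,
    max_replicas: int = 64,
) -> int:
    """Proportional scaling rule, clamped to [min_replicas, max_replicas].

    current_metric and target_metric are per-replica values, e.g. requests/s
    per GPU replica (an assumed metric for illustration).
    """
    if target_metric <= 0:
        raise ValueError("target_metric must be positive")
    proposed = math.ceil(current_replicas * current_metric / target_metric)
    return max(min_replicas, min(max_replicas, proposed))
```

For example, 4 replicas each seeing 90 requests/s against a target of 60 would scale to 6. When traffic drops off, the count would shrink toward `min_replicas`.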

AI-native orchestration

Containerization and workload scheduling are central to efficient GPU utilization. AI clouds provide orchestration layers that automatically allocate resources, manage dependencies, and optimize jobs across clusters. This ensures greater GPU utilization and prioritizes compute cycles for active workloads. Developers can deploy frameworks like PyTorch or TensorFlow with minimal setup and reproduce environments reliably across teams.
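
Schedulers differ widely, but a first-fit-decreasing heuristic illustrates the core packing problem. It places the largest GPU requests first, each on the first node with enough free GPUs. The node names, job names, and GPU counts below are hypothetical. Real orchestrators also weigh priorities, interconnect topology, preemption, and fairness.

```python
from dataclasses import dataclass, field


@dataclass
class Node:
    name: str
    free_gpus: int
    jobs: list[str] = field(default_factory=list)


def schedule(jobs: dict[str, int], nodes: list[Node]) -> list[str]:
    """Place jobs with first-fit decreasing; return the names of jobs that did not fit.

    `jobs` maps a job name to the number of GPUs it needs on a single node.
    """
    unplaced: list[str] = []
    # Largest requests first, so big jobs claim whole nodes before small jobs fragment them.
    for job, gpus in sorted(jobs.items(), key=lambda kv: kv[1], reverse=True):
        for node in nodes:
            if node.free_gpus >= gpus:
                node.free_gpus -= gpus
                node.jobs.append(job)
                break
        else:
            unplaced.append(job)  # stays queued until capacity frees up
    return unplaced
```

With two 8-GPU nodes, an 8-GPU training job fills the first node. A 4-GPU fine-tune, a 2-GPU evaluation, and a 1-GPU notebook then share the second node, leaving one GPU free.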

Security and governance

As AI models grow more valuable, so does the need for infrastructure that protects them. AI clouds implement strong identity management, encryption, and access control measures to protect intellectual property and training datasets. Governance tools provide audit trails and compliance reporting, allowing organizations to meet regulatory requirements while maintaining flexibility and transparency.

Together, these elements create an environment tuned for continuous innovation. Data scientists and engineers can spend less time configuring infrastructure and more time refining models, improving accuracy, and delivering real-world results. In this way, AI clouds become not only a technical foundation but also a strategic accelerator for building intelligent systems at scale.

Hyperscalers vs. neoclouds vs. AI clouds

Not all clouds are built the same. As AI workloads have grown more complex, the limitations of traditional cloud infrastructure have become clear. Systems built for general computing often struggle to handle the data movement, parallelism, and scalability that modern AI models require.

This demand has led to a new generation of cloud architectures that are tailored for high-performance training and inference. Today’s AI landscape spans three main types of providers, each with its own strengths, trade-offs, and design priorities.

Hyperscalers

Hyperscalers are large public clouds offering broad infrastructure and platform services across a global data center footprint. Their strength lies in massive scale, mature ecosystems, and extensive service catalogs that cover everything from storage and analytics to machine learning APIs.

However, their generalized architectures are not optimized for high-intensity AI workloads, which can lead to inefficiencies and higher costs when training or deploying large models. Organizations often turn to hyperscalers for global reach, enterprise integration, and reliability, but may face complexity in fine-tuning performance for AI-specific demands.

Neoclouds

Neoclouds are emerging providers that offer GPU-as-a-Service for specific workloads, such as AI, visual effects, or scientific computing. They tend to build leaner, more standardized, delivery-in-a-box services for AI jobs that emphasize speed and price. They typically offer simpler pricing, faster provisioning, and direct access to high-performance GPUs or accelerators without the overhead of legacy systems.

This specialty focus gives neoclouds greater agility than hyperscalers, but it can come with trade-offs, such as smaller global footprints or fewer ancillary services. Neoclouds also generally rely on virtualization, which can lower performance for AI workloads. Finally, neoclouds may offer less enterprise-ready solutions and limited capacity. This can restrict how far customers can scale on the platform or beyond it.

AI clouds

AI clouds are purpose-built environments engineered specifically for artificial intelligence workloads, from large-scale model training to low-latency inference. AI clouds integrate dense GPU clusters, optimized networking, and AI-native orchestration tools that manage workloads automatically for maximum utilization. These platforms are designed to minimize idle time, accelerate experimentation, and simplify scaling for teams that live and breathe machine learning.

While hyperscalers represent the traditional multipurpose cloud model and neoclouds blend that flexibility with performance-focused design, AI clouds narrow the focus to deep learning and model development. Because they are specialized by design, AI clouds often deliver the highest performance-per-dollar ratio for AI workloads but are generally narrower in scope than multipurpose clouds.

Understanding the distinctions between these environments helps teams align infrastructure with their goals, whether that means global reach, workload specialization, or peak performance.

As the boundaries between these categories continue to blur, many organizations are adopting hybrid strategies that combine the reach of hyperscalers with the agility of neoclouds and the precision of AI-specific environments. This shift reflects a broader truth: The choice of cloud provider is no longer just about infrastructure but about how efficiently and intelligently it can power the next wave of AI innovation.

Why use the cloud for AI?

The rise of AI has redefined what infrastructure must do. Training and deploying modern models require enormous computational power, continuous data movement, and near-constant iteration. Building and maintaining that level of capacity on-premises is costly, complex, and often impractical for most organizations. The cloud offers a dynamic alternative that scales with demand, supports global collaboration, and makes cutting-edge resources accessible to organizations of all sizes.

At its core, the cloud enables AI teams to focus on research and innovation instead of infrastructure management. Developers can train models on thousands of GPUs, spin up clusters in minutes, and experiment with new architectures without waiting for hardware procurement or configuration.

Key benefits of using the cloud for AI:

Speed

Distributed compute dramatically accelerates training cycles. Tasks that once required weeks of local processing can now be completed in hours by distributing workloads across hundreds of GPUs or nodes. Faster iteration enables faster breakthroughs, whether tuning a vision model or refining a large language model’s parameters.
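
How much distribution helps depends on how much of a job actually parallelizes. Amdahl's law gives a rough upper bound: with a parallel fraction p spread across n workers, speedup is 1 / ((1 - p) + p / n). The sketch below applies that formula. The parallel fractions and the two-week baseline are illustrative assumptions. Real distributed training also pays communication costs that the formula ignores.

```python
def amdahl_speedup(parallel_fraction: float, workers: int) -> float:
    """Upper-bound speedup from Amdahl's law for a given parallel fraction."""
    if not 0.0 <= parallel_fraction <= 1.0:
        raise ValueError("parallel_fraction must be between 0 and 1")
    if workers < 1:
        raise ValueError("workers must be at least 1")
    serial = 1.0 - parallel_fraction
    return 1.0 / (serial + parallel_fraction / workers)


def estimated_hours(baseline_hours: float, parallel_fraction: float, workers: int) -> float:
    """Wall-clock estimate after distributing a job that took baseline_hours on one worker."""
    return baseline_hours / amdahl_speedup(parallel_fraction, workers)


if __name__ == "__main__":
    two_weeks = 14 * 24  # 336 hours on a single worker (illustrative)
    for p in (0.99, 0.999):
        print(f"p={p}: {estimated_hours(two_weeks, p, 256):.1f} hours on 256 workers")
```

Under these assumptions, a two-week job shrinks to about 1.6 hours on 256 workers when 99.9% of it parallelizes. At 99%, it shrinks to roughly 4.7 hours. The serial fraction, not the GPU count, quickly becomes the limit.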

Scalability

An AI cloud adapts to changing workloads in real time. Teams can scale resources up or down as demand changes, keeping infrastructure efficient. Training typically requires large, sustained compute for longer periods, while inference scales more rapidly as user requests increase or decline.

Collaboration

AI is a team sport. Cloud platforms allow researchers, engineers, and data scientists across the world to access shared datasets, model checkpoints, and versioned codebases in real time. Centralized environments help reduce friction in development pipelines and improve reproducibility across distributed teams.

Cost efficiency

Owning AI hardware requires significant upfront investment in GPUs and infrastructure. AI clouds convert that cost into operational expenses, allowing teams to pay only for what they use. This avoids large purchases, reduces depreciation risk as hardware evolves, and allows instant scaling without long procurement cycles.
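
Whether renting beats buying comes down largely to utilization. Owned hardware pays off only after enough busy hours for the hourly savings to recover its upfront cost. The break-even sketch below treats the purchase price, on-premises running cost, and cloud hourly rate as placeholder assumptions supplied by the caller, not quoted prices.

```python
import math


def breakeven_hours(purchase_cost: float, onprem_hourly_cost: float, cloud_hourly_rate: float) -> float:
    """Busy hours after which owning becomes cheaper than renting.

    purchase_cost: upfront hardware cost; onprem_hourly_cost: power, cooling,
    and operations per busy hour; cloud_hourly_rate: rental price per hour.
    All inputs are assumptions supplied by the caller.
    """
    savings_per_hour = cloud_hourly_rate - onprem_hourly_cost
    if savings_per_hour <= 0:
        return math.inf  # owning never pays off at these rates
    return purchase_cost / savings_per_hour


def breakeven_years(hours: float, utilization: float) -> float:
    """Convert busy hours to calendar years at an average utilization in (0, 1]."""
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in (0, 1]")
    return hours / (365 * 24 * utilization)
```

If the break-even point lands beyond the hardware's useful life, or beyond the point when it would be replaced by a newer generation, renting comes out ahead. That is the depreciation risk described above.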

Innovation velocity

Cloud-native tools and APIs make it easier to experiment with new frameworks, deploy models into production, and integrate with data pipelines or edge systems. This speed from idea to execution enables teams to iterate more often, fail faster, and reach successful outcomes sooner.

Security

AI clouds build enterprise-grade protection into every layer of the platform, including hardware isolation, real-time identity control, and continuous verification. This ensures that AI workloads are not just fast but also secure.

These cloud advantages make AI development not only feasible but sustainable at scale. They turn the challenge of infrastructure into a catalyst for discovery, so that innovation is limited by imagination rather than by hardware.

AI cloud use cases and real-world applications

The value of an AI cloud becomes most tangible in practice. From large-scale research to real-time decision systems, AI clouds operate as the backbone of innovation across industries. They make it possible to train, fine-tune, and deploy models that were once beyond the reach of even the largest on-premises data centers.

Examples of how AI clouds are being used today:

- Generative AI and large language models: train and host foundation models for chatbots, image generation, and writing assistants using large-scale GPU compute

- Media and entertainment: accelerate rendering, visual effects, and virtual production with dense GPUs and elastic scaling to reduce production time

- Healthcare and life sciences: enable precision medicine, drug discovery, and diagnostics through large-scale genomic analysis, imaging, and simulation

- Financial services: power fraud detection, algorithmic trading, and risk modeling with real-time data analysis and adaptive AI models

- Scientific research and climate modeling: run large-scale simulations and analyze complex systems with accessible high-performance AI infrastructure

- Manufacturing and robotics: support computer vision, predictive maintenance, and autonomous systems with cloud-based training and edge inference

Across these applications, AI clouds enable a new level of collaboration, experimentation, and discovery. They transform infrastructure into a creative engine for solving problems that span industries and disciplines.

How the cloud is evolving for AI

An AI cloud continues to evolve as workloads become increasingly complex and models grow in size and sophistication. Early AI development relied heavily on static infrastructure and single-GPU configurations, but today’s models demand distributed compute, specialized hardware, and smarter orchestration at massive scale. These changes are driving a new generation of cloud innovation designed to meet the unique performance, sustainability, and accessibility needs of AI.

The evolution of an AI cloud is shaped by four key trends that are redefining how infrastructure is built, deployed, and managed:

Energy-aware scaling

Power consumption is one of the biggest challenges in modern AI. To address this, AI clouds are increasingly designed around energy-efficient architectures, liquid cooling systems, and intelligent workload scheduling that help minimize waste. Some are also incorporating renewable energy sources and carbon tracking to help organizations align their compute usage with sustainability goals. This shift reflects a broader industry focus on balancing performance with environmental responsibility.
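
Carbon-aware scheduling can be as simple as shifting a deferrable job into the window with the lowest forecast grid carbon intensity. The sketch below assumes an hourly forecast in gCO2/kWh supplied by some external source; the numbers in the example are made up. It picks the start hour that minimizes the total intensity over the job's duration.

```python
def best_start_hour(forecast: list[float], duration_hours: int) -> int:
    """Return the start index whose window of duration_hours has the lowest total forecast intensity.

    Uses a sliding window so each hour is added and removed once (O(n)).
    """
    if not 1 <= duration_hours <= len(forecast):
        raise ValueError("duration must fit inside the forecast")
    window = sum(forecast[:duration_hours])
    best_start, best_total = 0, window
    for start in range(1, len(forecast) - duration_hours + 1):
        # Slide the window one hour: drop the hour leaving, add the hour entering.
        window += forecast[start + duration_hours - 1] - forecast[start - 1]
        if window < best_total:
            best_start, best_total = start, window
    return best_start
```

Given a hypothetical forecast of `[400, 350, 200, 180, 300, 500]` and a two-hour job, the function returns 2. The job would run during the two cleanest consecutive hours.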

Data-centric optimization

Traditional architectures that move data to compute are giving way to designs that bring compute closer to data. By colocating compute, storage, and high-throughput networking, AI clouds reduce latency, minimize network overhead, and accelerate distributed training. AI-native storage, caching, and data pipelines enable massive datasets to flow seamlessly into training and inference workloads, eliminating bottlenecks as AI models become more dependent on real-time, data-intensive operations.
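
Part of the case for bringing compute to the data is simple arithmetic: transfer time is dataset size divided by effective link throughput. The sketch below estimates how long copying a dataset would take before training could even start. The efficiency factor standing in for protocol overhead, the dataset size, and the link speeds are all illustrative assumptions.

```python
def transfer_seconds(dataset_bytes: float, link_bits_per_s: float, efficiency: float = 1.0) -> float:
    """Time to move a dataset over a link running at `efficiency` times its line rate."""
    if link_bits_per_s <= 0 or not 0 < efficiency <= 1:
        raise ValueError("link rate must be positive and efficiency in (0, 1]")
    return dataset_bytes * 8 / (link_bits_per_s * efficiency)


if __name__ == "__main__":
    ten_tb = 10 * 10**12  # decimal terabytes
    for gbps in (10, 100, 400):
        hours = transfer_seconds(ten_tb, gbps * 10**9, efficiency=0.8) / 3600
        print(f"10 TB over {gbps} Gb/s at 80% efficiency: {hours:.2f} h")
```

At 10 Gb/s and 80% efficiency, for example, 10 TB takes 10,000 seconds (about 2.8 hours) every time it is copied. Colocated compute and storage avoid that cost.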

Software innovation

The software stack behind AI infrastructure is evolving as quickly as the hardware. Modern AI clouds feature orchestration frameworks, AI-native operating systems, and model-serving platforms that automate deployment, scaling, and version control. These tools simplify the transition from training to production, reduce configuration complexity, and make it easier for teams to deploy models across multiple environments. Continuous optimization at the software layer ensures that developers can focus on building intelligence rather than managing infrastructure.

Hybrid and edge integration

As AI applications move beyond the data center, the need for hybrid and edge deployment is growing. AI clouds extend capabilities to on-premises environments, enterprise data centers, and even edge devices. This allows models trained in the cloud to be deployed closer to where data is generated, whether that’s a factory floor, a hospital, or an autonomous vehicle. The result is lower latency, improved responsiveness, and a more connected AI ecosystem.

These advancements point toward a future where an AI cloud becomes the default foundation for intelligent systems, spanning research, development, and real-world deployment. The lines between cloud, on-premises, and edge are blurring into a single, flexible continuum of compute. As this evolution continues, organizations that embrace adaptive, AI-optimized infrastructure will be best positioned to innovate faster, deploy smarter, and lead in the next era of intelligent computing.

The future of AI cloud

Although the AI cloud is still in its early stages, its trajectory is clear. It is evolving from an infrastructure solution into a fully intelligent platform that can optimize itself, anticipate needs, and integrate seamlessly with emerging technologies. As AI models grow more capable, the clouds that power them must also adapt.

In the near future, AI clouds will likely:

- Self-optimize performance and cost: adaptive orchestration systems will automatically allocate resources based on workload behavior, ensuring peak efficiency without manual tuning

- Unify the compute continuum: the distinction between cloud, on-premises, and edge will fade as workloads move fluidly between environments

- Expand accessibility: tools that once required deep technical knowledge will become available through intuitive, low-code interfaces and managed AI pipelines

- Enhance interoperability: open-source frameworks and cross-cloud standards will make it easier to train and deploy models across different platforms without starting from scratch

- Align with global sustainability goals: energy-efficient hardware, carbon-aware scheduling, and renewable-powered data centers will become key differentiators for responsible AI infrastructure

Looking ahead, AI clouds will serve as the foundation for an interconnected ecosystem where models, data, and compute coexist in harmony. They will not only accelerate innovation but also democratize it, empowering researchers, startups, and enterprises alike to participate in building the next generation of intelligent systems.